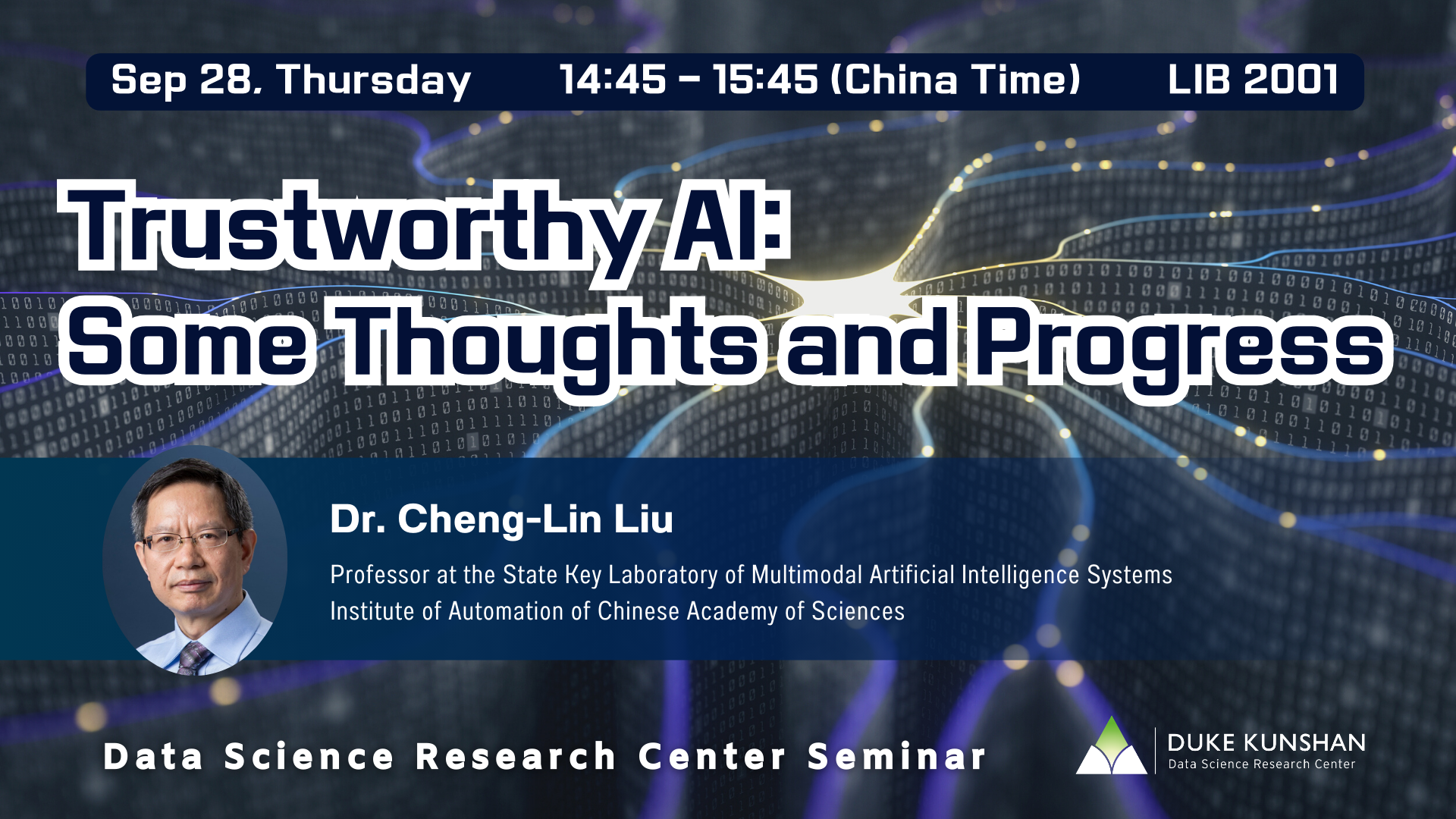

Abstract

Deep neural networks dominate various perception and cognition tasks in artificial intelligence (AI), but they rely heavily on training with large amounts of data and lack robustness, explainability, and adaptability. As a result, deploying AI applications in open environments remains challenging, especially in scenarios that demand high reliability. In this talk, I will review the status and limitations of current AI technology and introduce recent progress toward robust and trustworthy AI, including work on explainable models, robust models, adaptation, and confident learning. Finally, I will discuss the prospects for research in this direction.

Speaker Bio

Cheng-Lin Liu is a Professor at the State Key Laboratory of Multimodal Artificial Intelligence Systems, Institute of Automation of Chinese Academy of Sciences. He is a vice president of the Institute of Automation and a vice dean of the School of Artificial Intelligence, University of Chinese Academy of Sciences. He received his PhD degree in pattern recognition and intelligent control from the Chinese Academy of Sciences, Beijing, China, in 1995. He was a postdoctoral fellow in Korea and Japan from March 1996 to March 1999. From 1999 to 2004, he was a researcher at the Central Research Laboratory, Hitachi, Ltd., Tokyo, Japan. His research interests include pattern recognition, machine learning, and document image analysis. He has published over 400 technical papers in journals and conferences. He is an Associate Editor-in-Chief of Pattern Recognition Journal and Acta Automatica Sinica, and an Associate Editor of the International Journal on Document Analysis and Recognition, Cognitive Computation, IEEE/CAA Journal of Automatica Sinica, Machine Intelligence Research, CAAI Trans. Intelligence Technology, CAAI Artificial Intelligence Research, and Chinese Journal of Image and Graphics. He is a Fellow of the CAA, the CAAI, the IAPR, and the IEEE.